Projects

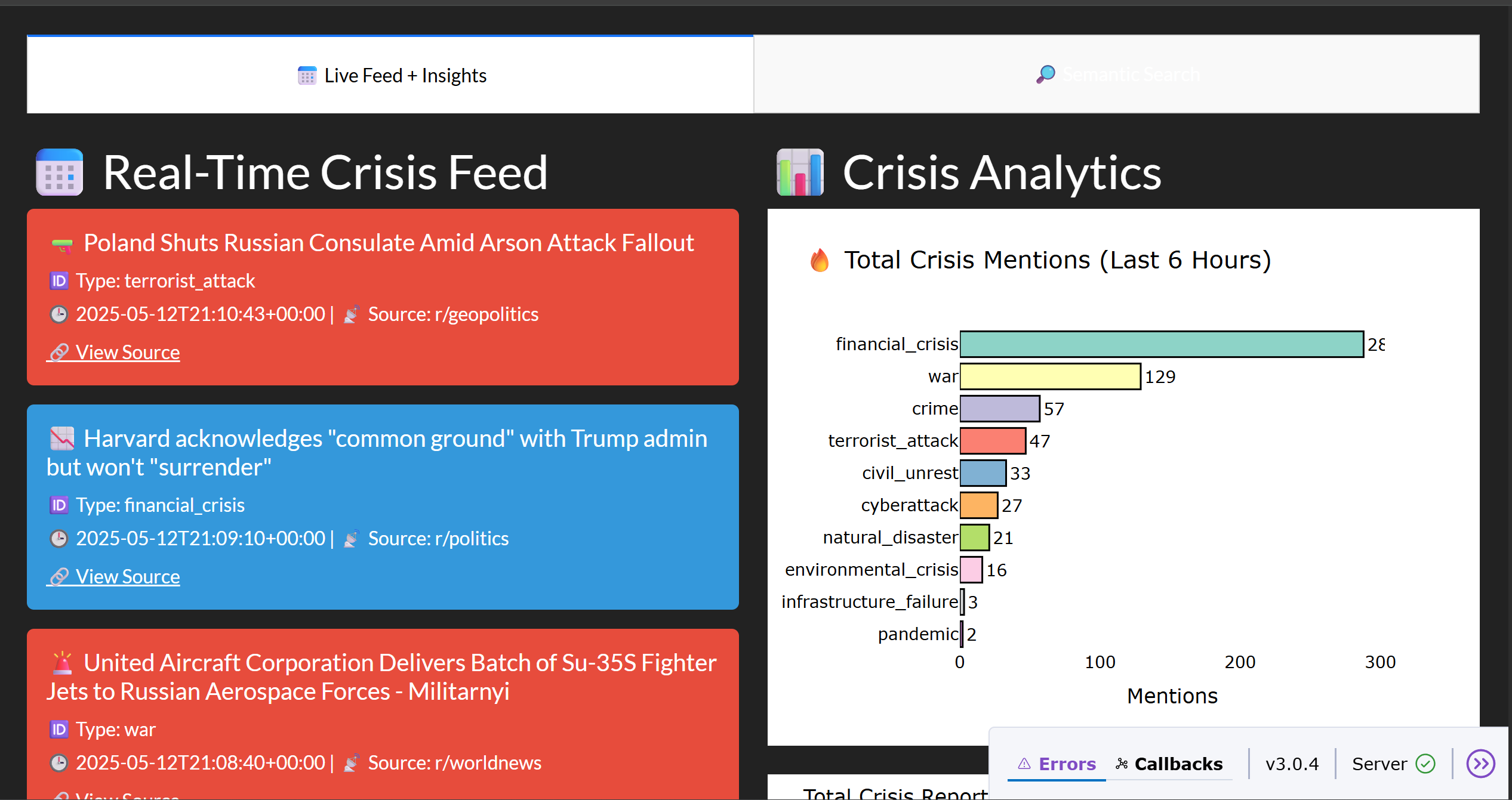

CrisisCast

Tech Stack: Kafka, Spark, MongoDB, Qdrant, FastAPI, Plotly

Developed an end-to-end real-time pipeline that ingests social signals from Reddit and Google, classifies posts using a fine-tuned LLM, and visualizes crises on a dynamic dashboard. Used Kafka, PySpark, FastAPI, MongoDB, and Qdrant for scalable processing and semantic search.
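The semantic search step can be illustrated with a minimal sketch of cosine-similarity ranking, the kind of vector comparison a vector database such as Qdrant can perform. This is not the project's code: the toy vectors, the post IDs, and the `top_k` helper are hypothetical, and the embedding model and Qdrant configuration actually used are not described here.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat a zero vector as dissimilar to everything
    return dot / (norm_a * norm_b)


def top_k(query, indexed, k=2):
    """Return the k (post_id, score) pairs most similar to the query."""
    scored = [(post_id, cosine_similarity(query, vec))
              for post_id, vec in indexed.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Toy 3-dimensional embeddings (hypothetical; real embeddings are much larger).
posts = {
    "post_a": [1.0, 0.0, 0.0],
    "post_b": [0.9, 0.1, 0.0],
    "post_c": [0.0, 1.0, 0.0],
}
results = top_k([1.0, 0.0, 0.0], posts, k=2)
```

A production system would delegate this ranking to the vector store's approximate nearest-neighbor index rather than scoring every post in Python.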

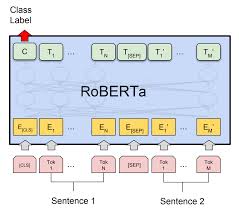

Parameter-Efficient Transformer

Tech Stack: PyTorch, Hugging Face, LoRA, Python

Fine-tuned RoBERTa-base on AG News using LoRA, training just 0.4% of the parameters and reaching 92.3% accuracy in 3 epochs. Reduced GPU memory use by 50% and kept training time under 5 minutes per epoch, which keeps the approach practical to scale.
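To show why LoRA trains so few parameters, here is a rough parameter-count sketch. It assumes the standard LoRA formulation, where a frozen weight of shape `d_out x d_in` gets two low-rank factors of shapes `d_out x rank` and `rank x d_in`. The rank, the choice of adapted projections, and the approximate base-model size are illustrative assumptions, not the project's settings. The result is not expected to reproduce the 0.4% figure, which also depends on details such as whether the classification head is trained.

```python
def lora_trainable_params(d_in, d_out, rank):
    """Parameters added by one LoRA adapter: A is (rank x d_in), B is (d_out x rank)."""
    return rank * (d_in + d_out)


# Assumed, illustrative configuration (not taken from the project):
hidden_size = 768            # RoBERTa-base hidden dimension
num_layers = 12              # RoBERTa-base encoder layers
adapted_per_layer = 2        # e.g. the query and value projections
rank = 8                     # hypothetical LoRA rank
base_params = 125_000_000    # approximate RoBERTa-base parameter count

per_adapter = lora_trainable_params(hidden_size, hidden_size, rank)
total_lora = per_adapter * adapted_per_layer * num_layers
fraction = total_lora / base_params
print(f"{total_lora:,} adapter parameters, {fraction:.2%} of the base model")
```

Because the adapter size grows linearly with `rank`, doubling the rank doubles the trainable parameters while the frozen base weights stay untouched.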

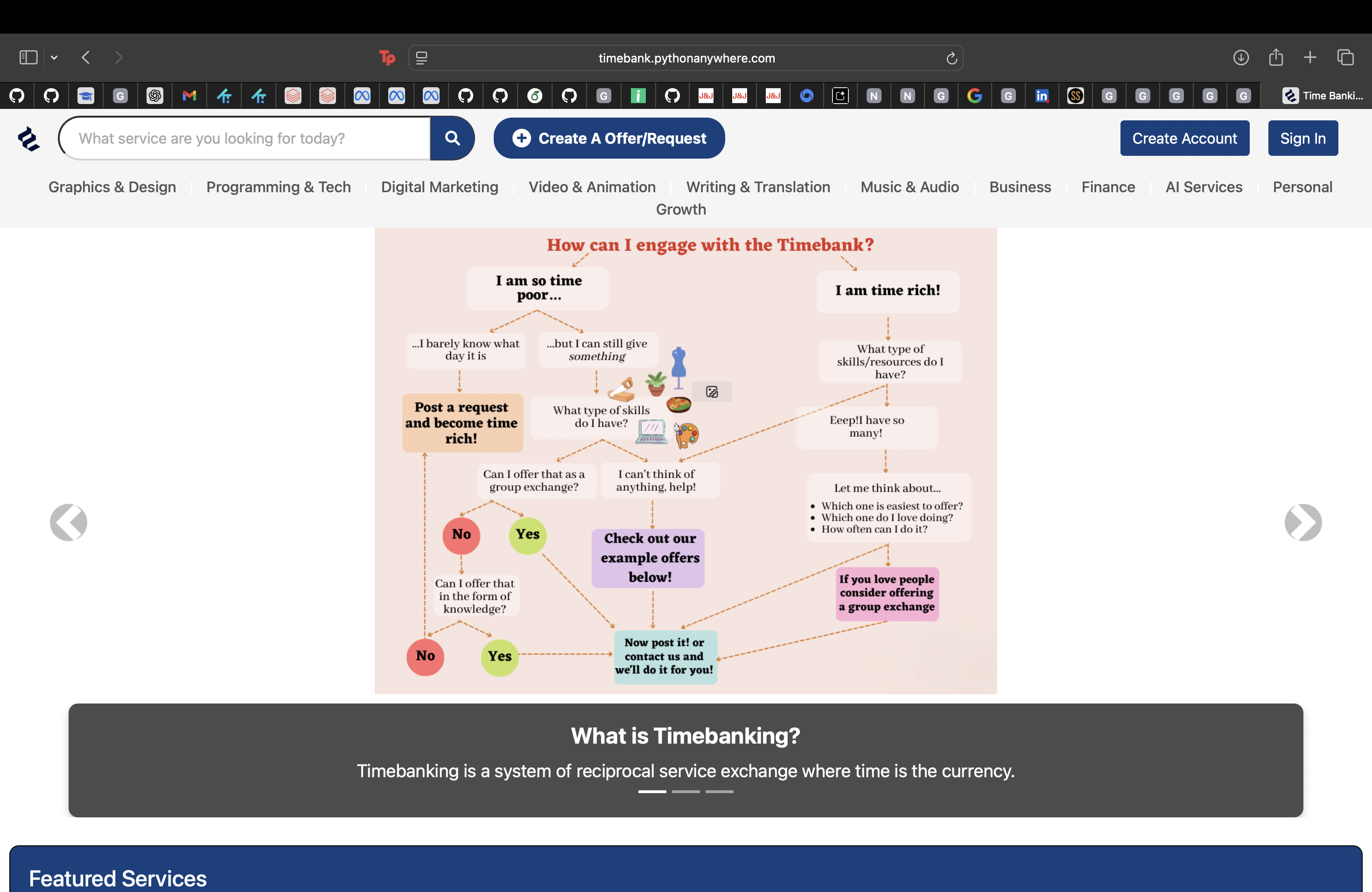

Time Banking

Tech Stack: Django, HTML, CSS, REST APIs, JavaScript, GitHub Actions

Built a Django-based service marketplace where users exchange time and quality as currency. Designed a responsive UI using HTML, CSS, and JavaScript for cross-platform use. Implemented RESTful APIs for backend logic and integrated CI/CD pipelines via GitHub Actions, achieving 85% test coverage. Followed Agile/Scrum practices to ensure reliable delivery and iterative development.

Efficient Deep Learning with Narrow ResNet-18

Tech Stack: PyTorch, CIFAR-10, ResNet, Cutout, Cosine Annealing

Built a compact, high-performing image classification model using a width-scaled ResNet-18 architecture on the CIFAR-10 dataset. Optimized for efficiency by reducing the model to under 5M parameters and incorporating Cutout augmentation, label smoothing, and cosine annealing learning rate scheduling. Achieved 95.5% test accuracy in 250 epochs while significantly lowering memory and compute requirements, making it suitable for edge deployment.
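The cosine annealing schedule mentioned above can be sketched in plain Python. This uses the closed-form single-cycle decay, the same shape produced by schedulers such as PyTorch's `CosineAnnealingLR`. The 250-epoch horizon comes from the description; the starting rate `lr_max=0.1` and the function name are hypothetical.

```python
import math


def cosine_annealed_lr(epoch, total_epochs, lr_max, lr_min=0.0):
    """Learning rate at `epoch` under one cosine decay from lr_max to lr_min."""
    progress = epoch / total_epochs
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))


# 250 epochs is from the project description; lr_max=0.1 is an assumed value.
schedule = [cosine_annealed_lr(e, 250, lr_max=0.1) for e in range(251)]
```

The rate starts at `lr_max`, passes through the midpoint halfway through training, and flattens out near `lr_min` at the end, so the last epochs take small, stable steps.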

Comparative Analysis of LLMs

Tech Stack: Hugging Face, PyTorch, LLMs

Implemented BERT and GPT from scratch and fine-tuned them on WikiText, SQuAD, and CNN/DailyMail. Achieved losses of 1.67 (BERT) and 2.97 (GPT), highlighting strengths in QA and summarization with a modular inference pipeline.